Data Engineering at Scale.

Building the invisible infrastructure that powers modern decision-making. We specialize in the design, construction, and maintenance of high-performance data pipelines that transform raw streams into actionable intelligence.

Resilience is not a feature; it is the foundation.

At East Data Partners, we view **data engineering** as a rigorous discipline of software craftsmanship. Too often, pipelines are treated as temporary bridges—fragile scripts that break the moment a schema changes or a cloud region experiences latency.

Our approach in Kuala Lumpur focuses on decoupling storage from compute, implementing robust error-handling circuits, and ensuring that every byte moving through your system is accounted for. We don't just move data; we secure its integrity from the moment of ingestion to the final analytical output.
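One common way to check that every record is accounted for is to reconcile source and target after each load. The sketch below, which assumes both sides can be read back as Python dictionaries with identical value types, compares row counts plus an order-independent checksum. The function names `fingerprint` and `reconcile` are illustrative, not part of any specific tool.

```python
import hashlib
from collections.abc import Iterable, Mapping

_MOD = 2 ** 256  # keep the running checksum within the size of a SHA-256 digest


def fingerprint(rows: Iterable[Mapping[str, object]]) -> tuple[int, str]:
    """Return (row_count, checksum) for a batch, independent of row order.

    Each row is serialised with sorted keys and hashed; the per-row digests
    are summed modulo 2**256, so reordering rows does not change the result
    while duplicated or missing rows do.
    """
    count = 0
    total = 0
    for row in rows:
        canonical = repr(sorted(row.items())).encode("utf-8")
        digest = hashlib.sha256(canonical).digest()
        total = (total + int.from_bytes(digest, "big")) % _MOD
        count += 1
    return count, f"{total:064x}"


def reconcile(source_rows, target_rows) -> bool:
    """True if the target holds exactly the same rows as the source."""
    return fingerprint(source_rows) == fingerprint(target_rows)
```

A modular sum is used rather than XOR so that two identical rows do not cancel each other out.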

Automated Schema Validation

Proactively detecting upstream schema changes prevents downstream analytical failures, so your BI tools never display corrupted "zombie" data.
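As a minimal sketch of what such a check can look like, assuming a hypothetical `orders` feed whose expected fields and Python types are declared up front, each incoming record is compared against the contract and the batch is rejected before it reaches the warehouse. Production setups usually rely on a schema registry or a data-quality framework; the names here are illustrative only.

```python
from typing import Any

# Hypothetical contract for an upstream "orders" feed.
EXPECTED_SCHEMA: dict[str, type] = {
    "order_id": int,
    "customer_id": int,
    "amount": float,
    "currency": str,
}


def validate_record(record: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return human-readable schema violations (an empty list means valid)."""
    errors = []
    for field in sorted(schema.keys() - record.keys()):
        errors.append(f"missing field: {field}")
    for field in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected field: {field}")
    for field, expected_type in schema.items():
        if field in record and not isinstance(record[field], expected_type):
            errors.append(
                f"type drift on {field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    return errors


def validate_batch(records: list[dict[str, Any]], schema: dict[str, type]) -> None:
    """Raise before loading if any record breaks the contract."""
    for i, record in enumerate(records):
        errors = validate_record(record, schema)
        if errors:
            raise ValueError(f"record {i}: " + "; ".join(errors))
```

Failing the whole batch, rather than silently dropping bad rows, is what keeps partially broken data out of downstream dashboards.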

Compute Optimization

By leveraging modern **big data platforms**, we minimize idle resource time, directly reducing monthly cloud expenditures while increasing throughput.

Idempotent Pipelines

Our designs ensure that re-running a process never results in duplicated records, maintaining a "single source of truth" across the enterprise.
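A typical way to make a load step idempotent is to write with an upsert keyed on a business identifier instead of a plain append. The sketch below uses SQLite's `INSERT ... ON CONFLICT DO UPDATE` (available from SQLite 3.24) as a stand-in for a warehouse `MERGE`; the `orders` table and its columns are hypothetical.

```python
import sqlite3


def load_batch(conn: sqlite3.Connection, rows: list[dict]) -> None:
    """Upsert rows keyed on order_id so that re-running a batch is a no-op."""
    with conn:  # commits on success, rolls back if any row fails
        conn.executemany(
            """
            INSERT INTO orders (order_id, amount, status)
            VALUES (:order_id, :amount, :status)
            ON CONFLICT(order_id) DO UPDATE SET
                amount = excluded.amount,
                status = excluded.status
            """,
            rows,
        )


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL, status TEXT)"
)
batch = [
    {"order_id": 1, "amount": 10.0, "status": "paid"},
    {"order_id": 2, "amount": 5.5, "status": "pending"},
]
load_batch(conn, batch)
load_batch(conn, batch)  # e.g. a retry after a timeout: still two rows
```

Because a re-run overwrites rows with the same values, an orchestrator can retry a failed step safely without a manual clean-up.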

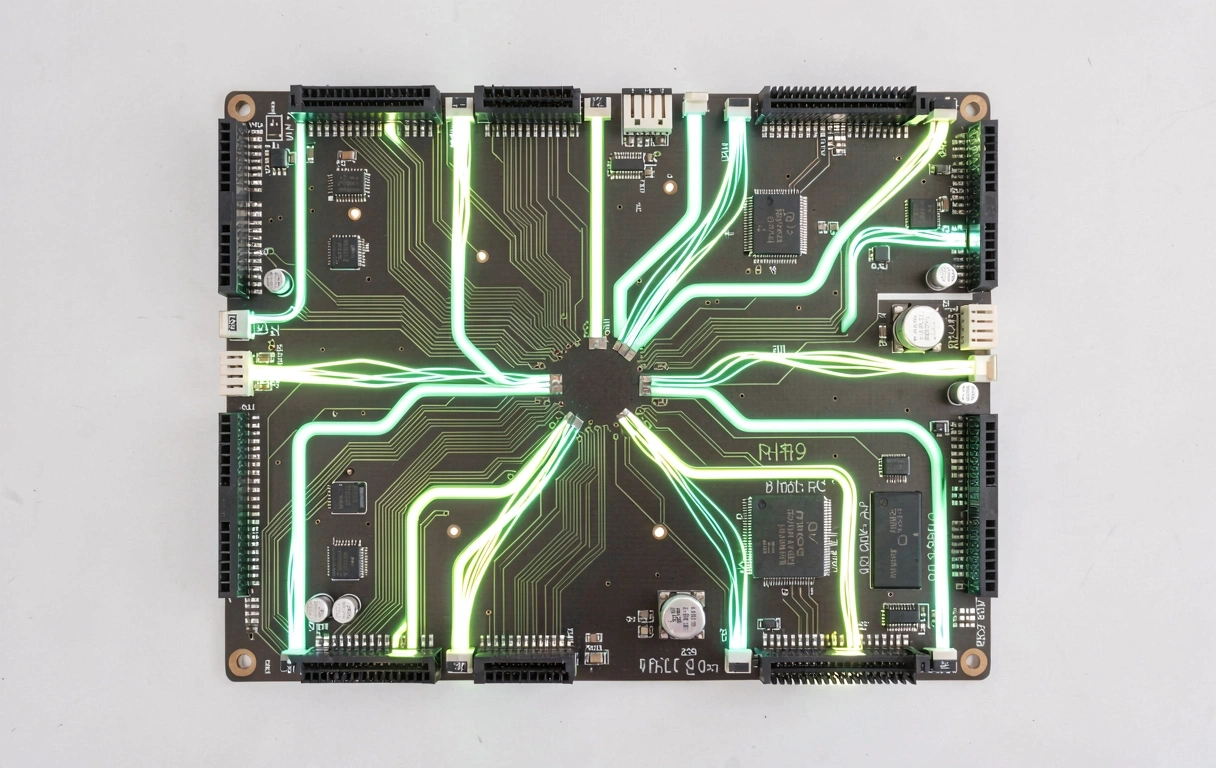

The Engineering Blueprint

A technical breakdown of how we architect data foundations for Malaysian enterprises.

01. Multi-Source Ingestion

We build connectors for diverse environments, from legacy on-premises relational databases to streaming IoT sensors. Using **cloud analytics** ingestion patterns, we support both real-time pub/sub models and high-volume batch loads without performance degradation.

- Change Data Capture (CDC)

- API Integration Wrappers

- Secure SFTP/S3 Polling
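Log-based CDC reads changes from the source database's transaction log. Where that is not available, a simpler query-based variant tracks a high-water mark on an `updated_at` column, as in the sketch below. The `customers` table, its columns and the sample rows are hypothetical, and SQLite stands in for the source system.

```python
import sqlite3


def extract_changes(conn: sqlite3.Connection, last_watermark: str):
    """Return rows changed since last_watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # Advance the watermark only as far as the newest row actually read.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark


src = sqlite3.connect(":memory:")
src.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
src.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Aminah", "2024-01-01T09:00:00"), (2, "Wei Ling", "2024-01-02T10:30:00")],
)

batch1, wm = extract_changes(src, "1970-01-01T00:00:00")  # initial full load
src.execute(
    "UPDATE customers SET name = 'Aminah Binti Ali', "
    "updated_at = '2024-01-03T08:15:00' WHERE id = 1"
)
batch2, wm = extract_changes(src, wm)  # picks up only the changed row
```

This approach does not see hard deletes and can miss rows that share a timestamp but commit late, which is why log-based CDC is generally preferred when the source supports it.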

02. Warehouse Automation

Storage is only valuable if it is organized. We automate the DDL generation and partitioning logic within your Data Warehouse (BigQuery, Snowflake, or Redshift), so that query performance holds up as your dataset grows from gigabytes to petabytes.

- Medallion Architecture (Bronze/Silver/Gold)

- Automated Materialized Views

- Column-level Access Control
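To illustrate the Medallion layering in miniature, the sketch below walks hypothetical order records from raw Bronze, through typed and deduplicated Silver, to an aggregated Gold view. In a warehouse each layer would be its own set of tables; plain Python lists stand in for them here, and floats are used for brevity where real monetary data would call for a decimal type.

```python
from collections import defaultdict

# Bronze: raw events exactly as ingested, duplicates and bad values included.
bronze = [
    {"order_id": "1", "region": "KL", "amount": "120.50"},
    {"order_id": "1", "region": "KL", "amount": "120.50"},  # duplicate delivery
    {"order_id": "2", "region": "Penang", "amount": "80.00"},
    {"order_id": "3", "region": "KL", "amount": "n/a"},     # unparseable amount
]


def to_silver(raw_rows):
    """Cast types, drop unparseable rows, and deduplicate on order_id."""
    silver = {}
    for row in raw_rows:
        try:
            clean = {
                "order_id": int(row["order_id"]),
                "region": row["region"].strip(),
                "amount": float(row["amount"]),
            }
        except (KeyError, ValueError):
            continue  # a real pipeline would route these to a quarantine table
        silver[clean["order_id"]] = clean  # last write wins per order_id
    return list(silver.values())


def to_gold(silver_rows):
    """Business-level aggregate: total revenue per region."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


gold = to_gold(to_silver(bronze))
```

Keeping Bronze untouched means Silver and Gold can always be rebuilt from the original data if a cleaning rule changes.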

03. Orchestration & Monitoring

The "glue" of the system. We implement Airflow or Dagster to manage dependencies across the stack. If a transformation step fails, the system automatically alerts the relevant team while maintaining the state of all healthy processes.

- Directed Acyclic Graphs (DAGs)

- Slack/Teams Integrated Alerting

- Data Quality Observability
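Airflow and Dagster handle this scheduling for you, but the core behaviour described above can be sketched with Python's standard `graphlib`: run tasks in dependency order, skip anything downstream of a failure, keep independent branches running, and raise an alert. The task names and the `alert` callback (standing in for a Slack or Teams webhook) are hypothetical.

```python
from graphlib import TopologicalSorter


def run_dag(tasks, deps, alert):
    """Run tasks in dependency order, isolating failures.

    tasks: task name -> zero-argument callable
    deps:  task name -> set of upstream task names
    alert: callback(task_name, exception), e.g. a chat webhook in production
    Returns task name -> "success" | "failed" | "skipped".
    """
    sorter = TopologicalSorter()
    for name in tasks:
        sorter.add(name, *deps.get(name, ()))
    state = {}
    for name in sorter.static_order():
        if any(state[u] != "success" for u in deps.get(name, ())):
            state[name] = "skipped"  # never run on top of a broken upstream
            continue
        try:
            tasks[name]()
            state[name] = "success"
        except Exception as exc:
            state[name] = "failed"
            alert(name, exc)
    return state


def fetch_fx_rates():
    raise ConnectionError("FX API timed out")  # simulated upstream outage


tasks = {
    "extract_orders": lambda: None,
    "extract_fx_rates": fetch_fx_rates,
    "clean_orders": lambda: None,
    "convert_currency": lambda: None,
    "orders_report": lambda: None,
}
deps = {
    "clean_orders": {"extract_orders"},
    "convert_currency": {"clean_orders", "extract_fx_rates"},
    "orders_report": {"clean_orders"},
}
alerts = []
state = run_dag(tasks, deps, lambda name, exc: alerts.append(f"{name}: {exc}"))
```

Here the FX outage blocks only `convert_currency`; `orders_report` still completes because it does not depend on the failed branch.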

System Performance & Maintenance

Maintenance is where engineering proves its worth. A "built-and-forgotten" pipeline is a liability. Our managed engineering services include periodic stress-testing and refactoring to ensure that the infrastructure evolves alongside regional cloud capacity updates.

Tech Stack Alignment

We focus on toolsets that provide long-term vendor neutrality and regional support within Southeast Asia.

Localized Expertise, Global Standards

Sovereignty & Compliance

For enterprises operating in Kuala Lumpur and across Malaysia, compliance with the Personal Data Protection Act 2010 (PDPA) is non-negotiable. Our **data engineering** pipelines are built with integrated masking and encryption-at-rest modules designed around local regulatory requirements.
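As a rough sketch of field-level masking, assuming hypothetical `ic_number` and `email` fields, the example below replaces identity numbers with a keyed HMAC-SHA256 token (consistent across loads, so joins still work, but not reversible without the key) and partially masks email addresses. Reading the key from the `PSEUDONYM_KEY` environment variable is an assumption; in practice it would come from a secrets manager. Encryption at rest is normally handled by the storage layer and is not shown.

```python
import hashlib
import hmac
import os

# Assumption: key supplied via a secrets manager; an env var stands in here.
PSEUDONYM_KEY = os.environ.get("PSEUDONYM_KEY", "dev-only-key").encode("utf-8")


def pseudonymise(value: str) -> str:
    """Keyed hash: the same input always yields the same token."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character and the domain, e.g. a***@example.com."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}" if domain else "***"


def mask_record(record: dict) -> dict:
    """Return a masked copy; the original record is left unchanged."""
    masked = dict(record)
    masked["ic_number"] = pseudonymise(record["ic_number"])
    masked["email"] = mask_email(record["email"])
    return masked
```

Applying masking in the pipeline itself, before data lands in analytical layers, keeps raw identifiers out of BI tools and ad-hoc queries.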


On-Shore Consultation

Direct access to engineers at Jalan Ampang 300 for complex architectural reviews.

Operational Sync

Emergency break-fix support during Malaysian business hours (9:00 to 18:00).

Ready to stabilize your data foundation?

Contact East Data Partners today for a candid audit of your current pipeline efficiency and scalability roadblocks.